geohash vs quadtree vs r-tree - three ways to find what's near you

every time your phone shows "5 ubers within a mile" or yelp pulls up restaurants near your dot on the map, somewhere in the backend a system is solving the same problem: given a point on earth, find all the other points close to it, fast.

the naive answer is to scan every row in your database, compute the haversine distance to the query point, and return everything within some radius. this works when you have 100 points. it absolutely melts when you have 100 million.

so we use spatial indexes. three come up over and over: geohash, quadtree, and r-tree. they all partition space, but they do it in fundamentally different ways — geohash encodes points into sortable strings, quadtree carves space into rigid quadrants, r-tree wraps data in flexible overlapping rectangles. let's walk through each, end with a concrete yelp example, and figure out which one to reach for when.

the problem they solve

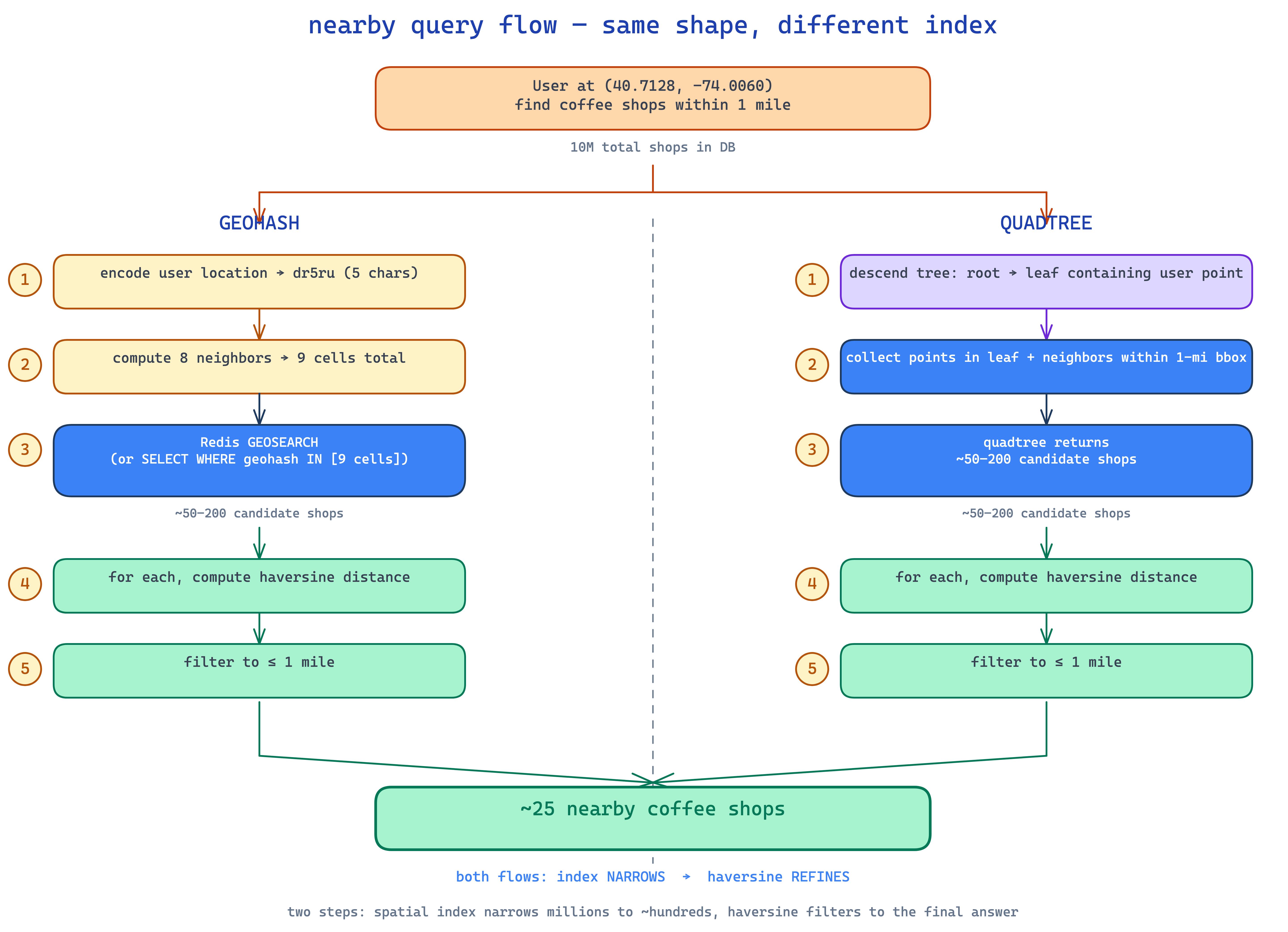

okay. concrete. you're building yelp. user opens the app at lat 40.7128, lon -74.0060 (manhattan). they want "coffee shops within 1 mile". you have 10 million coffee shops in your db.

a brute-force SELECT * FROM shops WHERE distance(lat, lon, 40.7128, -74.0060) < 1 does 10 million distance computations per query. dead. you'd burn through a server cluster before lunch.

what you actually want is: narrow the search to ~50 candidate shops in O(log n), then run the precise distance check on just those. that "narrowing" step is what spatial indexes do.

geohash, quadtree, and r-tree are three different bets on how to do the narrowing.

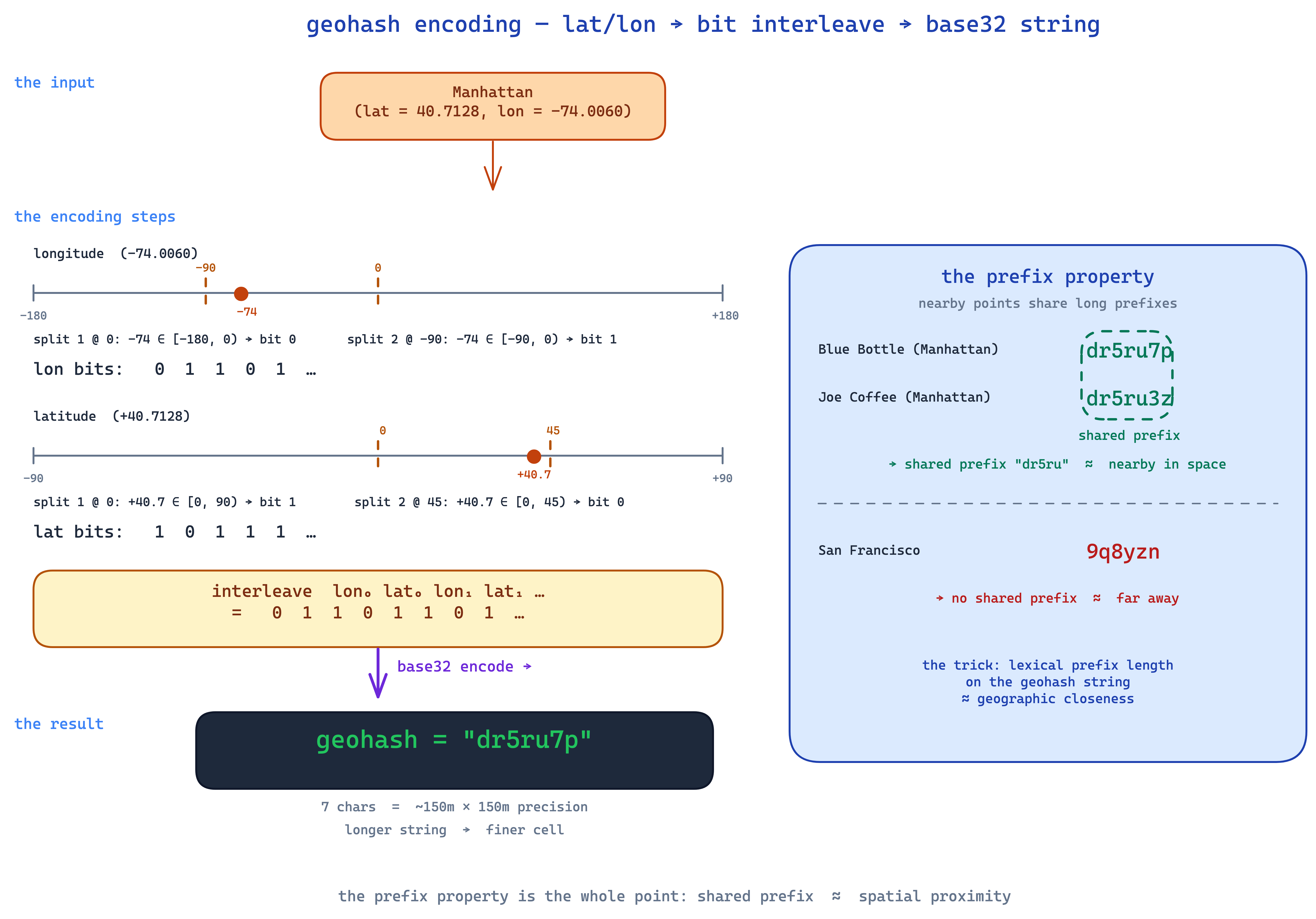

geohash — encode space into a string

geohash converts a (lat, lon) pair into a short base32 string like dr5ru7p. the magic is the prefix property: two points that are close on earth will, most of the time, share a long common prefix.

so dr5ru7p and dr5ru3z are nearby (both share dr5ru). dr5ru7p and 9q8yzn are very far (no shared prefix — different sides of the country).

how it actually encodes

geohash interleaves the binary representations of latitude and longitude. you take lat (range -90..90), repeatedly split it in half, write down whether your target is in the lower or upper half. same for lon (range -180..180). interleave the bits, encode in base32. each character adds about 5 bits of precision.

precision by length:

| length | cell size (approx) |

|---|---|

| 4 chars | ~39 km × 19 km |

| 5 chars | ~5 km × 5 km |

| 6 chars | ~1.2 km × 0.6 km |

| 7 chars | ~150 m × 150 m |

| 8 chars | ~38 m × 19 m |

so for "coffee shops within ~1 mile", a 5-character geohash bucket is about right. you store every shop pre-computed with its 5-char geohash, then the query becomes "give me all shops where geohash = 'dr5ru'."

why redis loves this

geohashes are sorted strings. they fit perfectly into a redis sorted set, or any b-tree index. redis actually has built-in commands for this — under the hood it's just a sorted set of geohash-encoded scores.

GEOADD shops -74.0060 40.7128 "blue-bottle"

GEOADD shops -74.0050 40.7130 "joe-coffee"

GEOSEARCH shops FROMLONLAT -74.0060 40.7128 BYRADIUS 1 mi

dead simple. no postgis, no kd-tree, no custom service. just a sorted set with magic strings.

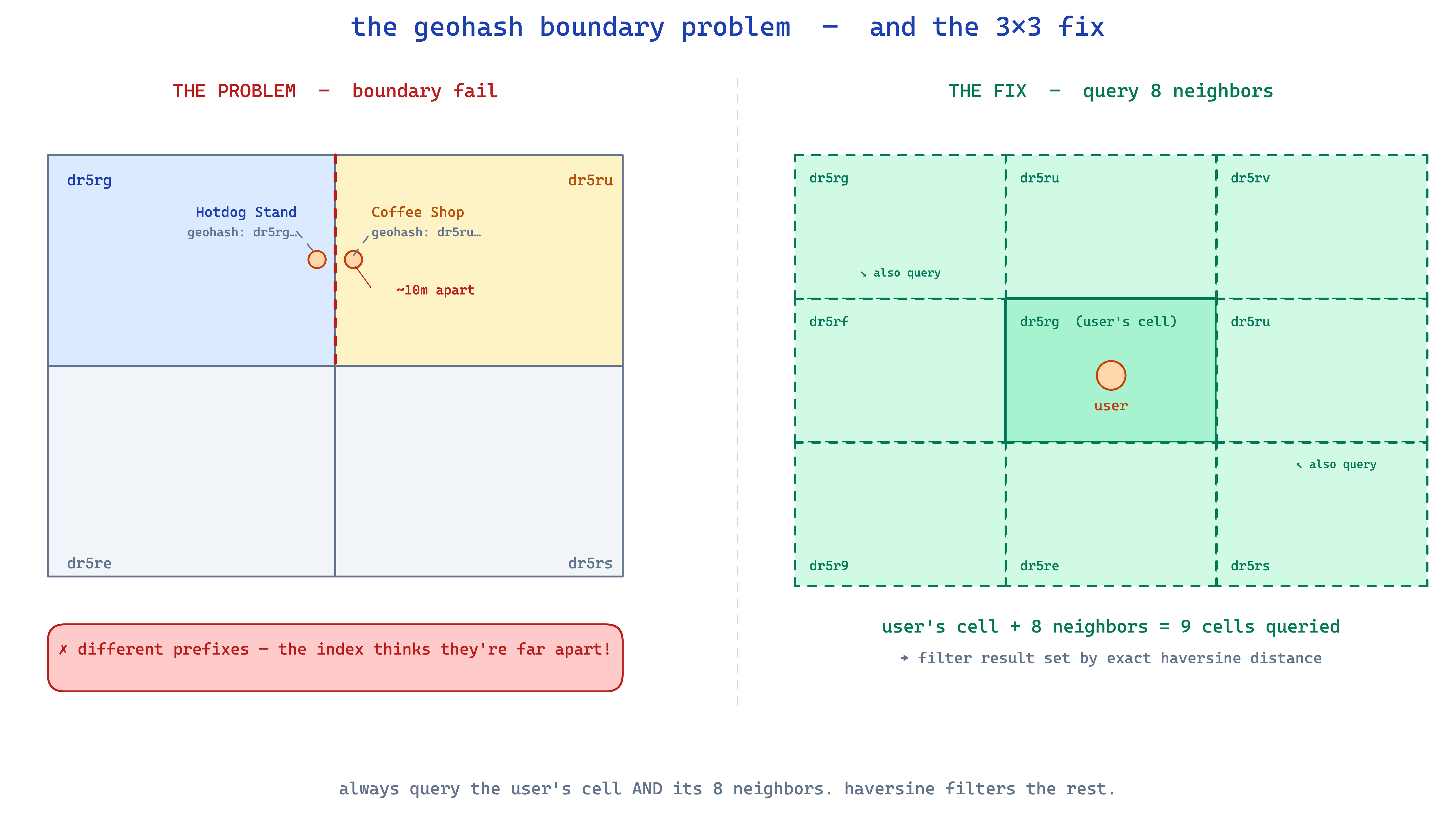

the edge case nobody mentions on day 1

geohash has one big gotcha: points right next to each other can fall in different cells.

imagine the cell boundary runs right through times square. a hotdog stand 5 meters east of the boundary has geohash dr5ru7. a coffee shop 5 meters west has geohash dr5rgz. they're 10 meters apart, but their geohashes don't even share the second character.

the fix is mandatory: always query the user's cell AND its 8 neighboring cells. there are well-known formulas to compute the 8 neighbors of any geohash, so this is cheap. you end up querying 9 cells instead of 1, then filtering the result with exact haversine distance.

so the real query loop is:

- compute the user's geohash at the right precision

- compute its 8 neighbors → 9 cells total

- fetch all points whose geohash is in that set

- compute exact haversine distance for each, filter to radius

- return

step 4 is the unavoidable "refinement" step. the index narrows you from 10 million to ~50–200 candidates. the haversine check filters those to the final answer.

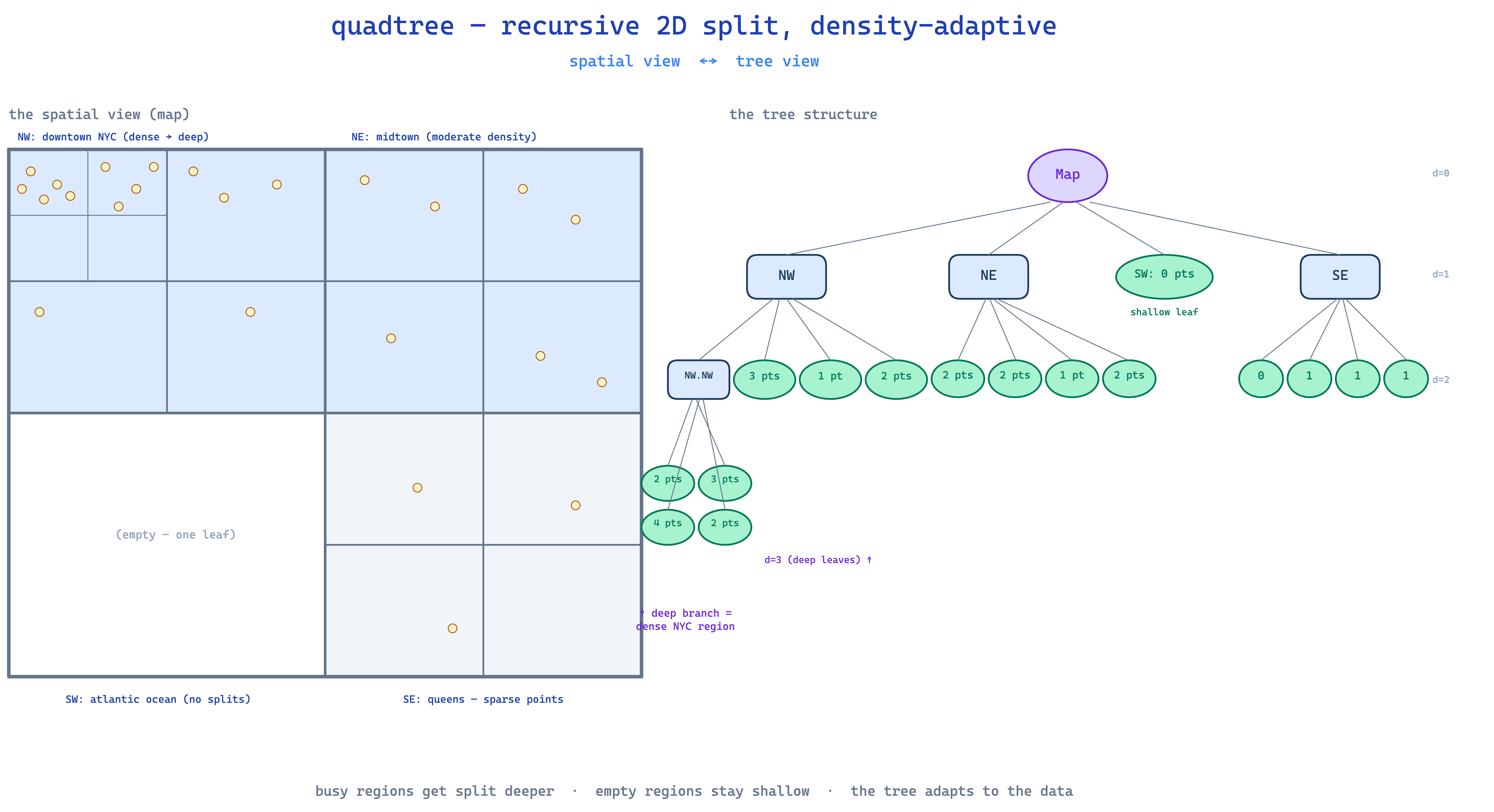

quadtree — recursive 2D split

quadtree comes at it differently. instead of encoding each point, it organizes space itself into a tree.

start with the whole 2D map as a single box. that's the root. when too many points are in one box (say, more than 4), split the box into 4 equal quadrants — NW, NE, SW, SE. each quadrant becomes a child node. if any of those children gets too crowded, split that one into 4 more. recurse.

the beauty is density-adaptive: downtown manhattan ends up with tiny leaf nodes (lots of recursive splits) while the middle of nebraska might be a single huge leaf. you're not wasting tree depth on empty space, and you're not letting busy areas get overcrowded.

the search

to find points near (lat, lon):

- start at the root

- descend into whichever quadrant contains your query point

- recurse until you're in a leaf

- that leaf contains the candidate points (and you check neighboring leaves too — same boundary problem as geohash, different mechanics)

- exact haversine check, return

each step picks 1 of 4 children, so search is O(log₄ n) — same shape as O(log n), just a tighter constant.

tradeoffs vs geohash

- density adapts automatically. if nyc has 100k coffee shops and nebraska has 50, the quadtree just handles it. with geohash, you have to manually pick a precision that works everywhere.

- memory overhead. every internal node, every pointer. for uniform-density data, geohash is way cheaper.

- harder to distribute. geohash strings shard naturally across machines (just hash the prefix). quadtrees need careful partitioning.

- rebalancing is real. as drivers move (uber!) or points get added/removed, the tree shape changes. geohash, since it's just a string per point, sidesteps this entirely.

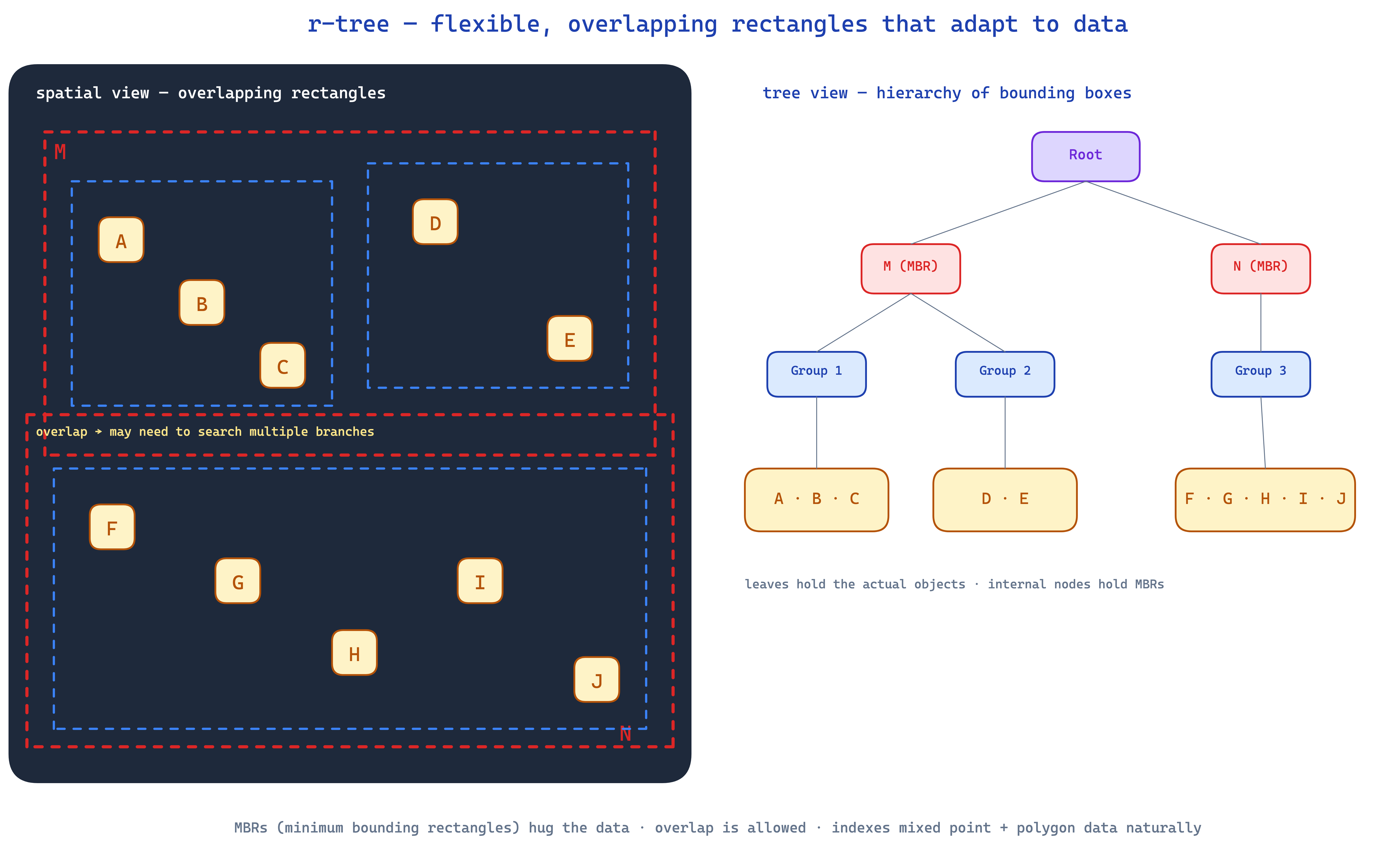

r-tree — flexible, overlapping rectangles

both geohash and quadtree split space on fixed grid lines — every cell or quadrant has a predetermined position and size. r-trees throw that constraint out. instead, they wrap the data in flexible, overlapping bounding rectangles that adapt to wherever the data actually clusters.

think of organizing a pile of photos on a table. quadtree would force you to draw a fixed 2×2 grid and keep subdividing. r-tree just lets you draw natural rectangles around clumps of nearby photos, even if those rectangles overlap a bit.

each rectangle is a minimum bounding rectangle (MBR) — the smallest axis-aligned box that contains all its children. the root has a few wide MBRs, each child has tighter ones, all the way down to leaves that hold actual data items.

why this matters

r-tree is the default in production databases. postgresql's gist indexes (and postgis) use r-trees. so does mysql's spatial index, oracle spatial, and basically every commercial spatial database. there's a reason for that.

- mixed data types. r-tree indexes points, polygons, road networks, delivery zones — anything with a bounding box — in the same index. quadtree struggles here because non-point shapes mess up the rigid grid; r-tree just expands the rectangle to fit.

- adapts to data clusters. rectangles hug the data rather than slicing space arbitrarily. fewer empty cells, fewer wasted traversals.

- disk-friendly. modern r-tree variants (r*-tree, hilbert r-tree) tune their splits for how databases actually read pages off disk. when you call

ST_DWithinin postgis, it's hitting an r-tree.

the trade-off

rectangles can overlap. when a query point sits inside two overlapping rectangles, you have to descend into both branches and union the results. lots of overlap means the index does extra work.

production r-tree implementations spend a lot of cleverness on the splitting heuristic — when a node fills up and has to split, how do you divide its children into two new MBRs that minimize overlap? this is where r-tree, r±tree, r*-tree, and hilbert r-tree differ. all the same shape, different splitting strategies.

in interviews and at the whiteboard: just say "r-tree" and you're fine. the variants matter for production tuning but not for the architectural conversation.

a concrete example — yelp "coffee near me"

let's walk through the same query with both approaches.

user at (40.7128, -74.0060) asks for coffee shops within 1 mile.

geohash version

# precompute (done once per shop, stored in db / redis)

shop "Blue Bottle" → geohash dr5ru7p

shop "Joe Coffee" → geohash dr5ru3z

shop "Stumptown SoHo" → geohash dr5rsbk

shop "...10M others"

# query time

user_geohash = encode(40.7128, -74.0060, length=5) = "dr5ru"

neighbors = ["dr5ru", # the user's cell

"dr5rg", "dr5rs", "dr5rv",

"dr5rt", "dr5rw",

"dr5rh", "dr5rk", "dr5re"] # 8 neighbors

# fetch all shops whose geohash starts with one of these 9 prefixes

candidates = SELECT * FROM shops WHERE LEFT(geohash, 5) IN (...9 values...);

# ~50–200 results

# exact distance filter

results = [shop for shop in candidates

if haversine(user, shop) <= 1.0]

simple. redis-friendly. one round trip. ship it.

quadtree version

# precompute (built in memory or stored in postgis-style index)

quadtree = build_quadtree(all_10M_shops)

# query time

user_point = (40.7128, -74.0060)

leaf = quadtree.find_leaf(user_point)

candidates = leaf.points + leaf.neighbors_within(1_mile)

# exact distance filter

results = [c for c in candidates if haversine(user, c) <= 1.0]

same overall shape, different data structure. the quadtree gracefully handles the fact that manhattan has 100x the shop density of staten island, while geohash needed you to pick a single global precision.

when to pick which

| use case | better fit | why |

|---|---|---|

| uber nearby drivers | geohash | drivers move every few seconds; just update the geohash string in redis. classic pattern. |

| tinder location matching | geohash | users update location periodically, sorted set, done |

| anything you want in redis quickly | geohash | it's literally a built-in |

| mapping with wildly varying density | quadtree | density-adaptive splits give better worst-case performance |

| physics sim, collision detection | quadtree | spatial reasoning over points matters more than encoding |

| yelp restaurant search at production scale | r-tree (via postgis) | mixed data — points + polygons (delivery zones, neighborhoods) — and you want a real db query, not just a redis lookup |

| mapping app indexing roads + POIs together | r-tree | only structure that gracefully handles points + polygons + linestrings in one index |

| postgis, mysql spatial, oracle spatial | r-tree | they literally use r-tree — that's the default |

rule of thumb:

- geohash when you need redis-fast, point-only, simple-as-possible.

- quadtree when density is wildly uneven and points are the only thing you index.

- r-tree when you're in a real database (postgis, mysql) and need to mix data types.

the universal pattern

both approaches share the same two-step structure:

- index → narrow. use the spatial structure to go from millions of candidates to a few hundred. cheap and fast.

- exact distance → refine. for each candidate, compute the real haversine distance. more expensive per-row, but only running on the narrowed set.

the index doesn't give you the right answer. it gives you a candidate set small enough that the exact answer is cheap to compute. forgetting step 2 is how you ship a "nearby" feature that includes shops 10 miles away just because they happened to share a geohash cell.

what i'd remember

- three different bets on the same problem. geohash = encoding, quadtree = rigid grid, r-tree = flexible overlapping rectangles.

- geohash is the right default for most "nearby" features built on redis or a sorted index.

- quadtree wins when point density varies a lot across your map.

- r-tree wins inside real databases (postgis, mysql) — and it's the only structure that gracefully indexes mixed shapes.

- always query neighbors, never just one cell. boundary edge cases will burn you otherwise.

- always do the exact distance check after the index. the index narrows; the distance check gives you the right answer.

- the "narrow then refine" two-step is universal. whichever index you pick, the second step is the same haversine filter.

nothing here is novel. redis has GEOADD, postgis has ST_DWithin, mongodb has 2dsphere indexes. the design is in picking the right tool, remembering the boundary fix, and not over-engineering. you're paid to solve a problem, not to ship the fanciest architecture.