Message queue

queues are one of those things that sound dead simple from the outside. you push stuff in one end, you read it from the other end, fifo, done. but the moment you try to build anything real on top of a queue, you realize there are like five layers of decisions hiding under the hood. and one of the biggest myths people walk around with is that queues are fifo. they are not. not in any system you'd actually run in prod.

so let's actually master queues. async patterns, push vs pull mechanisms, why fifo breaks the moment you have more than one worker, and where kafka stops being a "nice to have" and becomes the only sane answer.

what you'll take away

quick pointers so you know what to look for as you read:

- synchronous = whatever's slowest is your floor. one rate-limited dep can take down the whole flow. async is the escape hatch.

- enqueue is cheap, processing is async. the api responds in 200ms, the email lands 6 hours later, and for non-critical work that's totally fine.

- push vs pull is the real fork. push (rabbitmq) hides complexity in the broker. pull (sqs) gives you control and makes you write the rest.

- dedup, retries, backoff — only "free" with push. in pull-land you write all three yourself. that's the price of control.

- fifo is a lie when you scale out. multiple workers + variable processing time = out-of-order delivery, no matter what queue you're using.

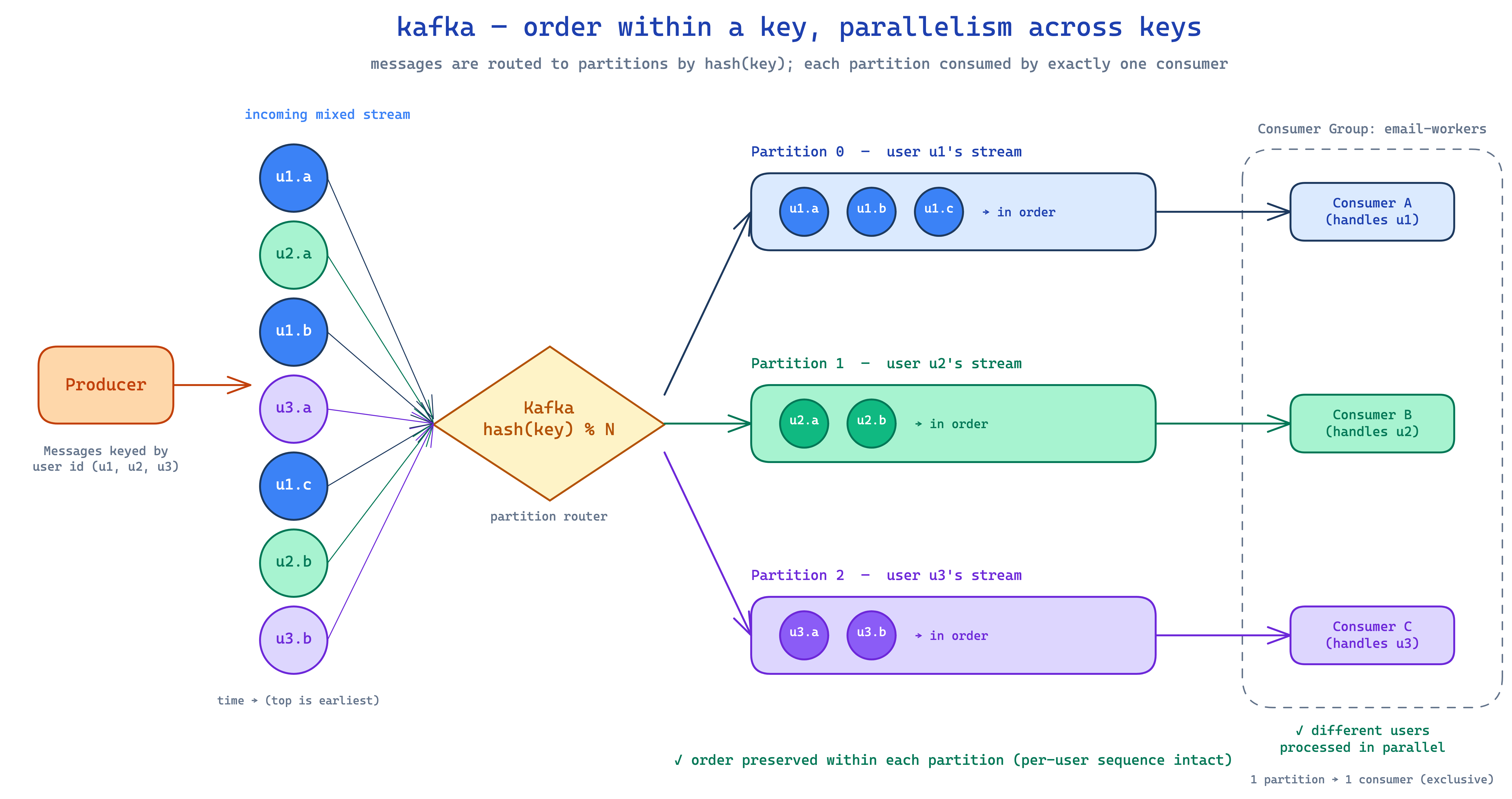

- kafka exists because ordering needs partitioning. key-based partitions guarantee order within a key, parallelism across keys.

- mix queues by use case. notifications can be push-based, video pipelines pull-based, event log on kafka — nobody's stopping you.

the synchronous trap

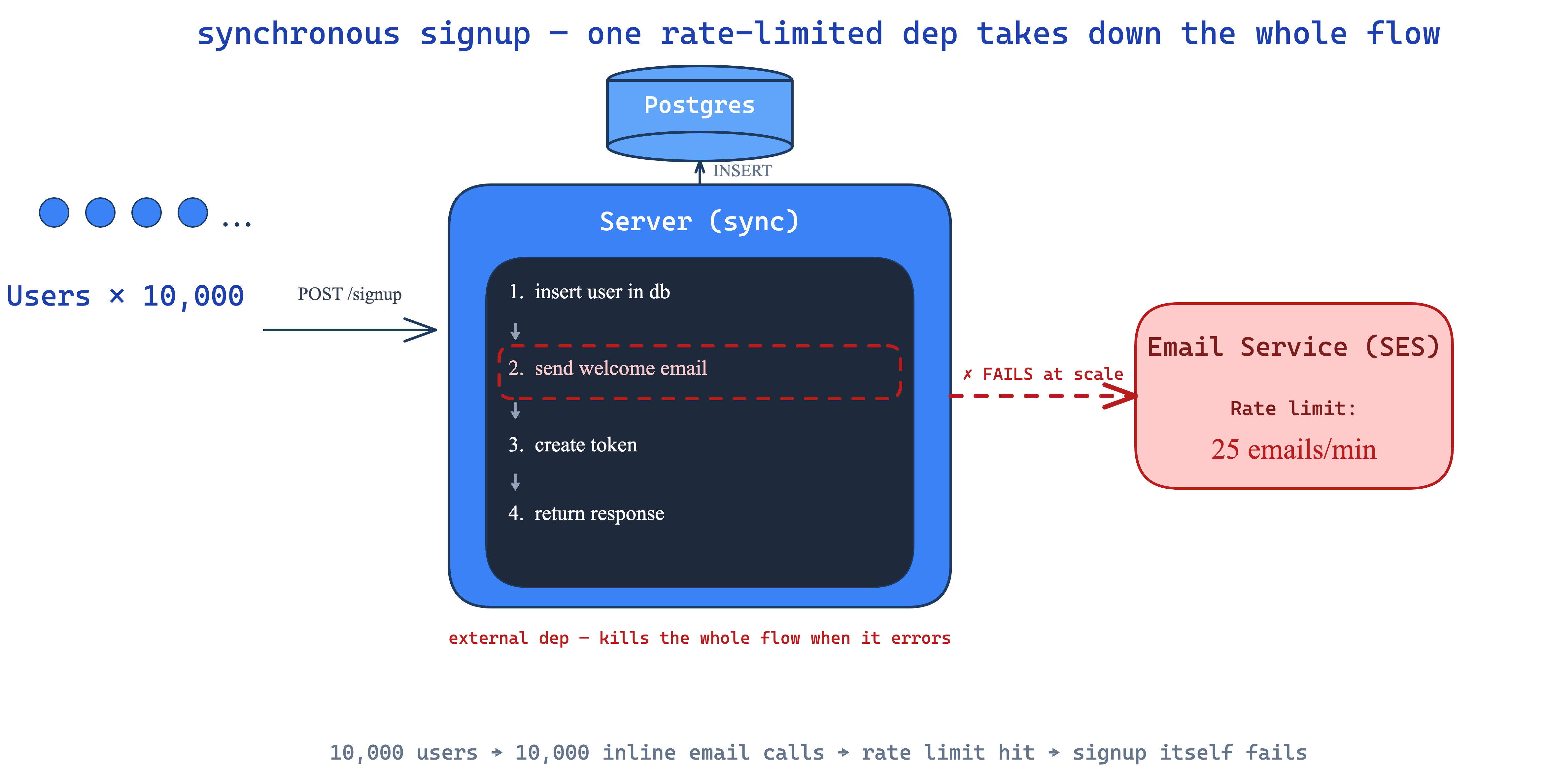

let's start with something concrete. you're building a signup flow. user hits POST /signup, you've got a server, you've got postgres, and you want to send a welcome email. easy:

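    def signup(req):
        insert_user_in_db()      # critical: no row, no user
        send_welcome_email()     # external call sitting inline on the request path
        token = create_token()   # critical: the user needs a session
        return token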

four steps, all in sequence. all synchronous. and at small scale this works perfectly fine — one user signs up, four steps fire, response goes back. ship it.

now bump the load to 10,000 signups in a minute. suddenly that send_welcome_email line is the problem. two things happen.

email service is an external dep. it's somebody else's box — aws ses, sendgrid, whatever. if it's slow, your signup is slow. if it's down, your signup is down. your signup now depends on an email server. that's a wild coupling for something as fundamental as user creation.

rate limits hit. email providers cap you. let's say ses gives you 25 emails per minute, which is honestly a healthy quota. but if 10,000 users hit signup in the same minute, you're firing 10,000 email calls into a 25-call budget. the rate limiter says no. your send_welcome_email starts erroring. and because the whole flow is synchronous, that error kills the entire signup. one external rate limit takes down user creation. unacceptable.

so what's actually critical here? insert_user_in_db — yes, without that there's no user. create_token — yes, the user needs a session. return response — obviously. but send_welcome_email? the user does not need that email in the same 200ms as their signup. if the email shows up 30 seconds later, who cares. if it shows up 5 minutes later, still fine. it's a non-critical task that's been hard-coupled into a critical path.

this is exactly why async processing exists.

the async escape hatch

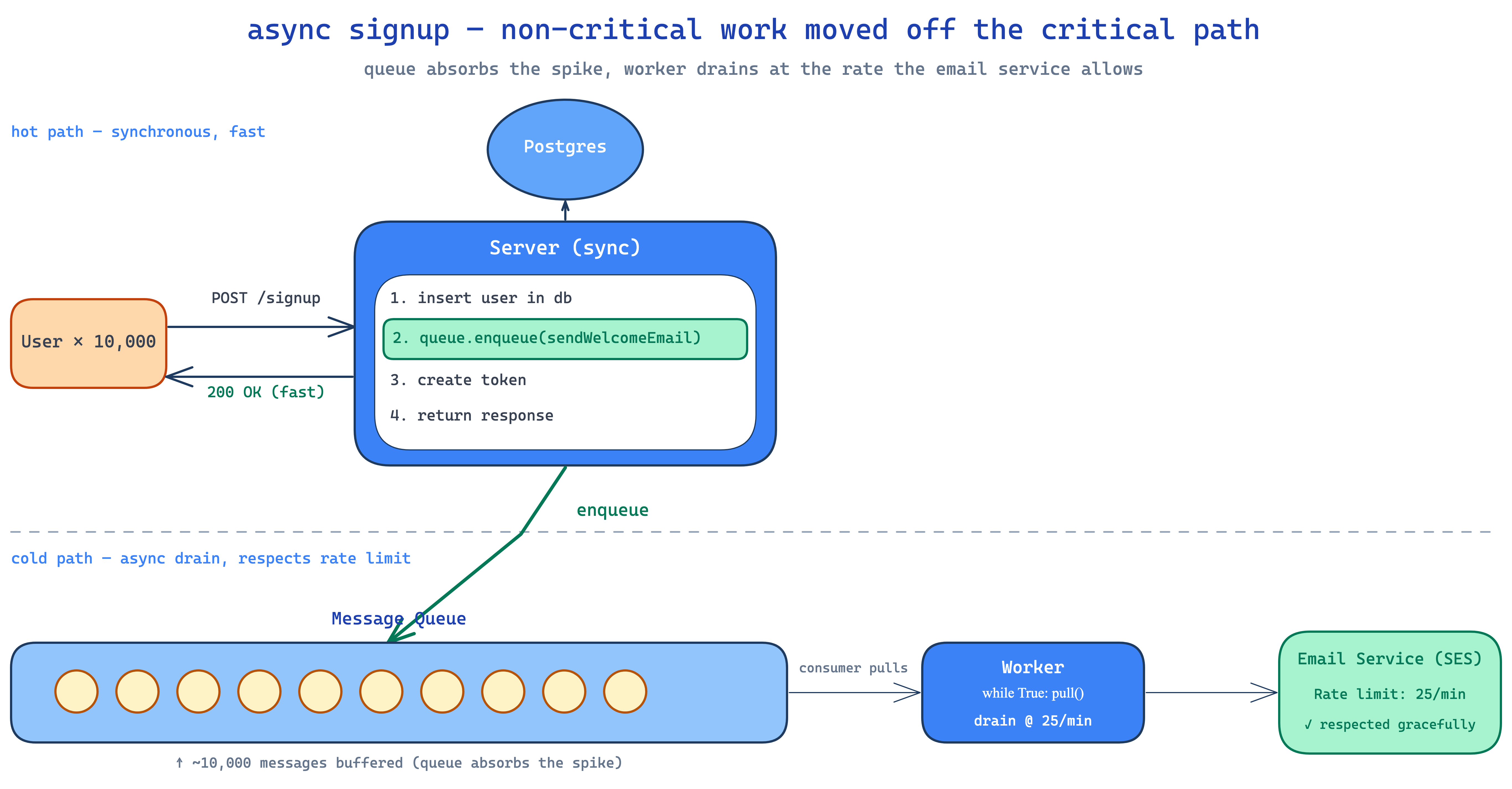

instead of sending the email inline, you enqueue the work and let something else process it later.

    def signup(req):
        insert_user_in_db()
        message_queue.enqueue(send_welcome_email)   # cheap: just records the job
        token = create_token()
        return token

three sync steps plus one cheap enqueue. signup is fast again. the queue holds the email job. some other process — a worker — is going to pick it up later and actually call the email service. that worker can respect the 25/min rate limit, sleep when it hits the cap, retry when slots open up. the queue absorbs all the spikiness.
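here's a rough, runnable sketch of that shape. it uses python's built-in queue.Queue and a thread as stand-ins for a real message queue and a separate worker process. the helper bodies (insert_user_in_db, create_token, send_welcome_email) and the sent_emails list are made up for illustration, so treat this as the idea rather than a production setup.

```python
import queue
import threading

# hypothetical stand-ins for the real db, token, and email calls
sent_emails = []

def insert_user_in_db(email):
    return {"email": email}

def create_token(user):
    return f"token-for-{user['email']}"

def send_welcome_email(email):
    # in real life this is the slow, rate-limited external call
    sent_emails.append(email)

email_jobs = queue.Queue()  # in-process stand-in for a real message queue

def signup(email):
    user = insert_user_in_db(email)
    email_jobs.put(email)          # cheap enqueue, no external call here
    return create_token(user)

def email_worker():
    # runs outside the request path and drains jobs one at a time
    while True:
        email = email_jobs.get()
        if email is None:          # sentinel: stop the worker
            email_jobs.task_done()
            break
        send_welcome_email(email)
        email_jobs.task_done()

worker = threading.Thread(target=email_worker, daemon=True)
worker.start()

token = signup("ada@example.com")  # returns without waiting on the email
email_jobs.put(None)
email_jobs.join()                  # only here so the demo can check the result
```

the point to notice: signup never touches send_welcome_email. if the email provider is slow or down, only the worker feels it.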

so if you got 10,000 signups in a minute, your queue now has 10,000 pending email jobs. the worker picks 25/min and the queue drains slowly. user #1's email arrives in seconds. user #9,999's email might arrive 6 hours later. and that's fine. welcome emails are not synchronous-critical. the same way youtube doesn't show your uploaded video instantly — it takes 10-15 minutes because some worker is processing it asynchronously. literally everywhere you look, the non-critical stuff is on a queue.
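the arithmetic behind that "6 hours" figure, as a tiny sketch. it assumes a steady 25/min drain and no new signups arriving while the backlog clears; drain_minutes is just a name picked for this example.

```python
def drain_minutes(pending_jobs, rate_per_minute):
    """minutes until the last job goes out, assuming a steady rate and no new arrivals."""
    return pending_jobs / rate_per_minute

minutes = drain_minutes(10_000, 25)
hours = minutes / 60
print(f"{minutes:.0f} minutes, about {hours:.1f} hours")
# prints: 400 minutes, about 6.7 hours
```

so the last person in the backlog waits a bit under 7 hours, which is the same ballpark as the number above.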

this is async processing. and queues are how you implement it.

the two ends of every queue

every queue has a producer side and a consumer side. producer is the easy part. doesn't matter what queue you're using — rabbitmq, kafka, sqs, bullmq — putting things in is always a one-liner. you call some sdk method, you give it a payload, it goes in. no surprise.

the consumer side is where it gets spicy. how does that worker actually get the next message? this is where queues split into two completely different worlds.

push vs pull — the fundamental fork

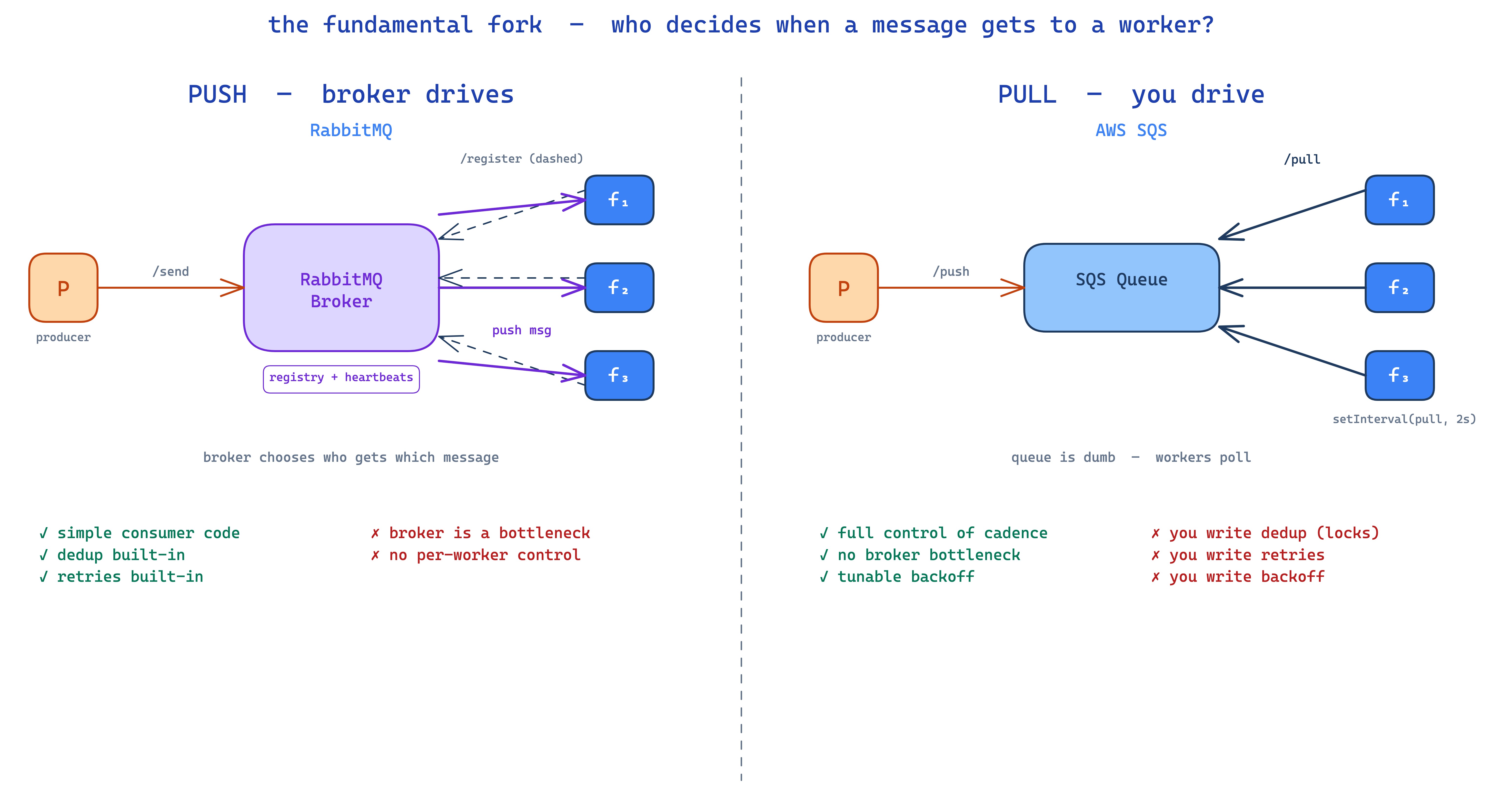

every queue is either push-based or pull-based. this single decision changes how you write the consumer, how you handle failures, how dedup works, and where the bottlenecks land.

push-based — the broker drives

push-based means the queue itself decides who gets which message and when. rabbitmq is the canonical example. here's the dance.

a worker spins up and the first thing it does is register itself with rabbitmq. it's basically saying "hey, i'm alive, i can process messages — when you have one, send it my way." rabbitmq writes that down in some internal map: worker-7 is available. the worker then sits there and waits.

every 30 seconds (or whatever the heartbeat interval is), the worker pings rabbitmq with a heartbeat. "still alive. still alive. still alive." if rabbitmq stops hearing those for more than its tolerance, it marks the worker dead and stops sending it messages. without heartbeats, a crashed worker would still be receiving work that nobody's processing — that's how you'd lose messages.

now when a producer pushes a message, rabbitmq looks at its registered live workers, picks one, and pushes the message at it. the worker didn't ask. it didn't poll. the message just shows up on its socket.
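to make the register / heartbeat / dispatch dance concrete, here's a toy in-memory broker. this is not rabbitmq's actual api or internals: ToyBroker, its method names, the round-robin choice, and the 60-second tolerance are all assumptions for illustration. time is passed in explicitly so the example runs instantly.

```python
class ToyBroker:
    """in-memory stand-in for a push-based broker (not rabbitmq's real api)."""

    def __init__(self, heartbeat_tolerance=60.0):
        self.heartbeat_tolerance = heartbeat_tolerance
        self.workers = {}      # worker_id -> callback
        self.last_seen = {}    # worker_id -> time of last heartbeat
        self._next = 0         # round-robin cursor

    def register(self, worker_id, callback, now):
        self.workers[worker_id] = callback
        self.last_seen[worker_id] = now

    def heartbeat(self, worker_id, now):
        self.last_seen[worker_id] = now

    def live_workers(self, now):
        # a worker counts as alive if it pinged within the tolerance window
        return [w for w in sorted(self.workers)
                if now - self.last_seen[w] <= self.heartbeat_tolerance]

    def publish(self, msg, now):
        live = self.live_workers(now)
        if not live:
            raise RuntimeError("no live workers")
        worker_id = live[self._next % len(live)]
        self._next += 1
        self.workers[worker_id](msg)   # the broker pushes; the worker never polls
        return worker_id

received = {"worker-1": [], "worker-7": []}
broker = ToyBroker(heartbeat_tolerance=60.0)
broker.register("worker-1", received["worker-1"].append, now=0.0)
broker.register("worker-7", received["worker-7"].append, now=0.0)

broker.heartbeat("worker-7", now=30.0)       # worker-1 goes silent after t=0
first = broker.publish("msg-a", now=45.0)    # both still within tolerance
second = broker.publish("msg-b", now=90.0)   # worker-1 last seen 90s ago: skipped
```

worker-1 gets msg-a while it still looks alive, then drops out of rotation once its heartbeats stop, so msg-b goes to worker-7.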

what's good about this:

- consumer code is dead simple. you literally write a callback, onMessage(msg), and rabbitmq invokes it. no polling loop. no "is there anything yet?" logic.

- deduplication is built-in. since one broker is choosing who gets what, it won't hand the same in-flight message to two workers at once. one source of truth.

- retries are built-in. if a worker takes the message but never acks it within some window, rabbitmq notices and re-queues it for someone else. you didn't have to write that.
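the ack-or-requeue idea from that last bullet can be modeled in a few lines. this is a toy version of the behavior described above, not how rabbitmq implements it: AckingQueue, the 30-second window, and the explicit now argument are all assumptions made so the example is deterministic.

```python
from collections import deque

class AckingQueue:
    """toy queue that redelivers messages nobody acked in time (illustrative only)."""

    def __init__(self, ack_timeout=30.0):
        self.ack_timeout = ack_timeout
        self.ready = deque()
        self.unacked = {}   # msg -> (worker_id, delivered_at)

    def publish(self, msg):
        self.ready.append(msg)

    def deliver(self, worker_id, now):
        self._requeue_expired(now)
        if not self.ready:
            return None
        msg = self.ready.popleft()
        self.unacked[msg] = (worker_id, now)   # in flight until acked
        return msg

    def ack(self, msg):
        self.unacked.pop(msg, None)

    def _requeue_expired(self, now):
        for msg, (_, delivered_at) in list(self.unacked.items()):
            if now - delivered_at > self.ack_timeout:
                del self.unacked[msg]
                self.ready.appendleft(msg)     # back to the front for someone else

q = AckingQueue(ack_timeout=30.0)
q.publish("email-1")

got_a = q.deliver("worker-a", now=0.0)          # worker-a takes it, then crashes (no ack)
got_b_early = q.deliver("worker-b", now=10.0)   # still in flight: nothing for worker-b
got_b_late = q.deliver("worker-b", now=45.0)    # ack window expired: redelivered
q.ack(got_b_late)
```

one thing this makes visible: if worker-a was only slow rather than dead, the same message gets processed twice. built-in retries are convenient, but your handler should still be safe to run more than once.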

what's not good:

- the broker is doing a lot of work. one box is tracking every worker, every heartbeat, every assignment. that's a real bottleneck and it slows down at scale.

- you give up control. you can't say "i want to process at exactly 50 msg/sec" — the broker decides cadence.

pull-based — you drive

pull-based flips it. the queue does nothing on its own. it just sits there. workers have to actively go fetch.

sqs (amazon's simple queue service) is the textbook pull-based system. conceptually it gives you two apis: /push to put a message in, /pull to ask for one (in the real sqs api these are SendMessage and ReceiveMessage, plus DeleteMessage once you're done with a message). that's the whole interface. no registration. no heartbeats. no broker choosing assignments. workers just call /pull whenever they want.

so your worker code looks like:

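    while True:
        msg = sqs.pull()        # ask for the next message, if any
        if msg:
            process(msg)
            sqs.delete(msg)     # delete only after processing succeeded
        else:
            sleep(backoff)      # queue was empty: wait before polling again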

a setInterval in js land. a while True in python. polling, basically.

now everything that was free in rabbitmq becomes your problem.

dedup is your problem. if three workers all call /pull at almost the same instant, sqs might hand the same message to multiple of them. you have to handle that yourself. typical fix is a redis lock — before processing message id 7, acquire a lock on lock:msg:7. if you can't get it, skip, somebody else has it. it works, but you wrote it.
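a minimal sketch of that lock-before-process pattern. LockStore here is an in-memory stand-in for redis (in real redis you'd reach for something like SET with the NX option plus an expiry so a crashed worker's lock eventually goes away); the class and the handle function are hypothetical names for this example.

```python
class LockStore:
    """in-memory stand-in for a shared lock store such as redis (hypothetical helper)."""

    def __init__(self):
        self._held = {}

    def acquire(self, key, owner):
        # mimics "set if not exists": only the first caller wins
        if key in self._held:
            return False
        self._held[key] = owner
        return True

locks = LockStore()
processed = []

def handle(worker_id, msg_id):
    if not locks.acquire(f"lock:msg:{msg_id}", worker_id):
        return False                       # somebody else has it, skip
    processed.append((worker_id, msg_id))  # the actual work would happen here
    return True

# three workers all receive message 7 at almost the same instant
results = [handle(w, 7) for w in ("worker-a", "worker-b", "worker-c")]
```

only the first worker to grab lock:msg:7 does the work; the other two back off.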

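retries are your problem. if you pulled a message and crashed mid-process, something has to make it available again. sqs helps a little here: a received message is only hidden for a visibility timeout and reappears if nobody deletes it. but how many attempts you allow, what counts as a failure, and which dead-letter queue (DLQ) failed messages land in for inspection are all yours to decide. sqs gives you primitives, not policies. you wire it.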
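here's roughly what "you wire it" looks like for retries plus a dead-letter list. everything in this sketch is an assumption for illustration: process_with_retries, the max_attempts parameter, and a plain python list standing in for a DLQ. a real worker would also wait between attempts.

```python
def process_with_retries(msg, handler, max_attempts, dead_letters):
    """try handler up to max_attempts times; park the message in dead_letters if all fail."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            handler(msg)
            return attempt                  # how many attempts it took
        except Exception as exc:
            last_error = exc                # remember why, then try again
    dead_letters.append((msg, repr(last_error)))
    return None

calls = {"flaky": 0}

def flaky(msg):
    # fails twice, then succeeds (e.g. a provider that recovers)
    calls["flaky"] += 1
    if calls["flaky"] < 3:
        raise RuntimeError("email provider timed out")

def always_broken(msg):
    raise ValueError("bad payload")

dlq = []
attempts_ok = process_with_retries("welcome:user-1", flaky, 5, dlq)
attempts_bad = process_with_retries("welcome:user-2", always_broken, 5, dlq)
```

the flaky job succeeds on its third try. the broken one burns all five attempts and ends up in dlq with its last error, ready for a human to look at.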

backoff is your problem. if your queue is empty and you're polling every minute, you're burning api calls (and sqs literally charges you per call). after 20 empty polls, slow down — go to every 5 minutes. after another 20 empties, every 15. the moment you find a message again, snap back to fast polling. you have to write that backoff strategy. rabbitmq workers don't have this problem because the broker pushes when there's something to push.
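the backoff schedule described above, sketched as a pure function so it's easy to test. the function names and the parameter defaults (60s, 300s, 900s, a step of 20 empty polls) just mirror the numbers in the paragraph; tune them for your own workload.

```python
def next_poll_interval(empty_polls, fast=60, medium=300, slow=900, step=20):
    """seconds to wait before the next poll, given consecutive empty polls so far."""
    if empty_polls < step:
        return fast       # every minute
    if empty_polls < 2 * step:
        return medium     # every 5 minutes
    return slow           # every 15 minutes

def simulate(poll_results):
    """poll_results: list of booleans, True when the poll returned a message."""
    empty_polls = 0
    intervals = []
    for got_message in poll_results:
        # any message resets the streak and snaps back to fast polling
        empty_polls = 0 if got_message else empty_polls + 1
        intervals.append(next_poll_interval(empty_polls))
    return intervals

# 45 empty polls in a row, then a message shows up
intervals = simulate([False] * 45 + [True])
```

in the worker loop, you'd sleep for next_poll_interval(empty_polls) in the else branch instead of a fixed backoff.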

so why would anyone use pull?

control. all the control is yours. you decide cadence, concurrency, retry policy, backoff, dedup strategy. for some workloads — high-throughput batch processing, video pipelines, anything where you want to tune carefully — that control is gold. and there's no single broker bottleneck because there's no broker doing dispatch.

tbh i lean pull-based for most production stuff. bullmq (which sits on redis) and sqs are pull-based and they're what i reach for. bullmq gives you nice abstractions on top so you barely notice the polling. sqs is full raw — you implement everything — but it's a fun engineering problem to solve and the operational overhead is basically zero. on a recent project of mine all the queues are sqs with a while True loop, backoff strategies, retry handling, the works. it's a vibe.

now the spicy part — queues are not fifo

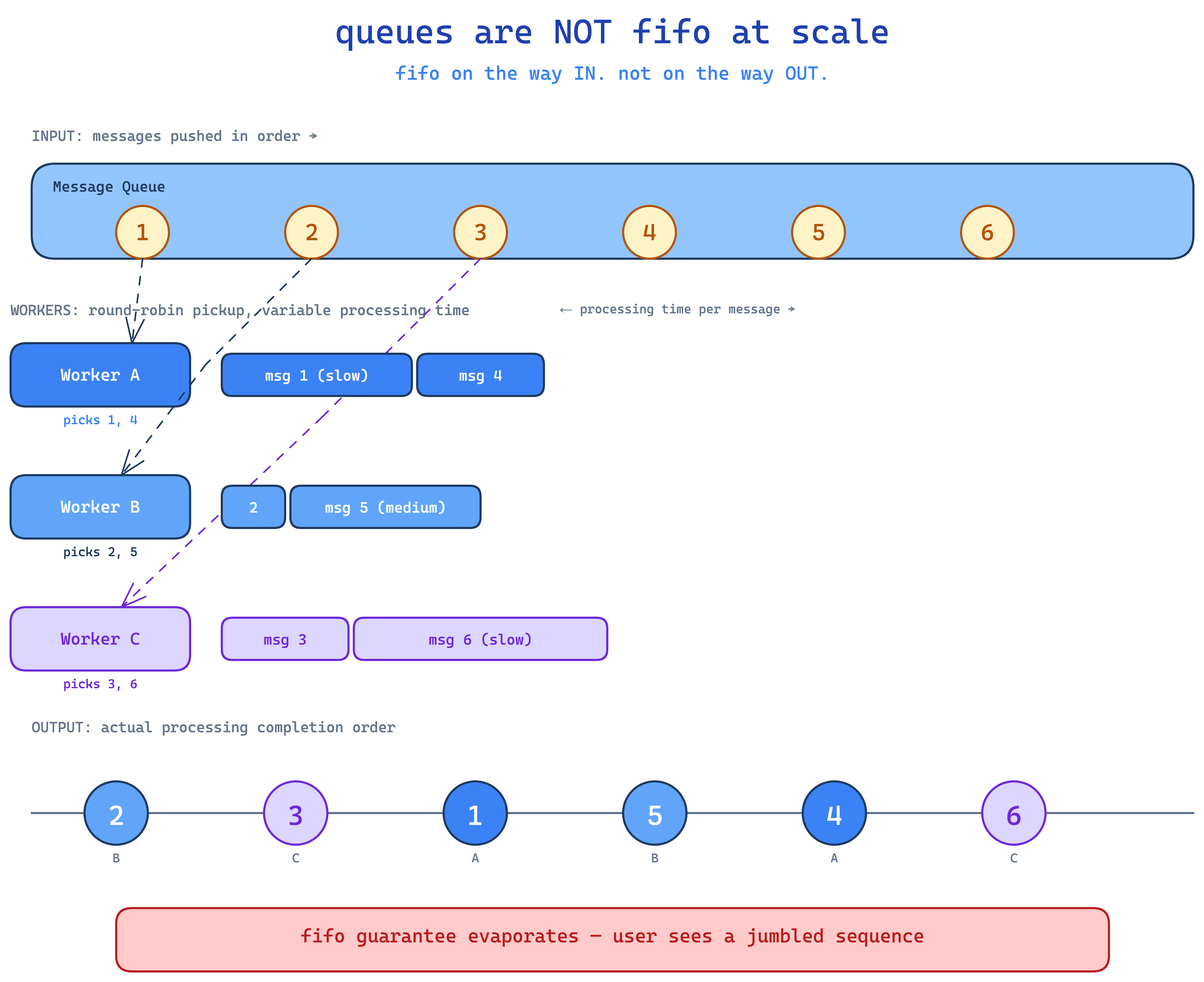

ask anyone what a queue is and they'll say "first in, first out." and yeah, that's the textbook data structure definition. but in a real distributed system, queues do not give you fifo. let me show why.

say you're building an sms onboarding flow that sends a sequence: welcome → product tour → 3-day-later check-in. order matters. the user can't get the check-in before the welcome. so you push them in order: msg 1, 2, 3, 4, 5, 6 into the queue.

if you only had one worker, fine. but you don't — you have multiple. that's literally the whole point of horizontal scaling. so worker A grabs msg 1, worker B grabs 2, worker C grabs 3, A grabs 4, B grabs 5, C grabs 6.

now what actually finishes first?

worker A's process for msg 1 might be slow (db hiccup, network jitter, gc pause, whatever). worker B finishes msg 2 first, so msg 2 reaches the user first. then worker C finishes msg 3. then worker A finally finishes msg 1. and so on.

end result: the user got messages in the order 2, 3, 1, 5, 4, 6.

the queue is fifo on the way in. it's not fifo on the way out. the moment you have more than one worker — and you always do, because that's literally what queues are for — sequential delivery is gone.
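you can reproduce that exact reordering with a small deterministic simulation. the processing times below are invented to match the story (msg 1 is the slow one); completion_order is a hypothetical helper that hands messages out round-robin and sorts them by when they finish.

```python
def completion_order(durations, workers):
    """round-robin messages across workers; return message ids in finish order."""
    free_at = {w: 0 for w in workers}    # when each worker is next idle
    finished = []                        # (finish_time, msg_id)
    for i, (msg_id, duration) in enumerate(durations.items()):
        worker = workers[i % len(workers)]
        done = free_at[worker] + duration
        free_at[worker] = done
        finished.append((done, msg_id))
    return [msg_id for _, msg_id in sorted(finished)]

# msg id -> made-up processing time; the queue itself is perfectly ordered 1..6
durations = {1: 3, 2: 1, 3: 2, 4: 2, 5: 3, 6: 4}

order_three = completion_order(durations, ["A", "B", "C"])
order_one = completion_order(durations, ["A"])
print(order_three)  # [2, 3, 1, 5, 4, 6]
print(order_one)    # [1, 2, 3, 4, 5, 6]
```

same queue, same messages, same enqueue order. the only thing that changed is the number of workers.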

for the welcome → tour → checkin flow, this is genuinely broken. user gets the product tour first. then the check-in. then, finally, the welcome. they're confused. you shipped a bug.

so how do you actually preserve order when you need it? hell naw to "just use one worker" — you need parallelism and ordering. that combo is the hard problem.

kafka — ordering inside a partition

this is exactly where kafka shines, and where the other queue systems quietly fall short. kafka introduces partitions and keys.

instead of one queue with everything mixed together, kafka splits the topic into N partitions. when you push a message, you attach a key — usually something like the user id. kafka hashes that key and sends the message to one specific partition. all messages with the same key always land in the same partition.
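the key-to-partition step is just a stable hash modulo the partition count. a minimal sketch, with one loud assumption: crc32 is used here as a convenient stand-in hash, not the hash kafka's own partitioner uses, and partition_for is a name made up for this example.

```python
import zlib

def partition_for(key, num_partitions):
    """stable hash of the key, modulo partition count (crc32 is a stand-in hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions

# the same key always maps to the same partition
user_1_partitions = [partition_for("user-1", 3) for _ in range(5)]
all_partitions = {partition_for(f"user-{i}", 3) for i in range(100)}
```

one practical note: python's built-in hash() is randomized per process for strings, so it would send the same key to different partitions on different producers. that's why the sketch uses a deterministic hash instead.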

then on the consumer side, kafka has consumer groups. each partition is consumed by exactly one consumer in the group. so partition 0 → consumer A, partition 1 → consumer B, partition 2 → consumer C.

what you get out of this:

- parallelism — different users' messages go to different partitions, processed concurrently by different consumers

- order within a key — all of user 1's messages live in the same partition, processed by the same consumer, in the order they were pushed

so user 1 gets welcome → tour → checkin in the right order. user 2's messages can interleave with user 1's because they're on a different partition, but user 2's own sequence is preserved too. ordering where it matters, parallelism where it doesn't.
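here's a small simulation of why per-key order survives. to keep it short it partitions by numeric user id modulo the partition count (a simplification, not a real key hash), and it models each consumer as reading its partition front to back. the names and data are invented for the example.

```python
from collections import defaultdict

NUM_PARTITIONS = 3

def partition_for(user_id):
    # simplification: numeric ids modulo partition count instead of a real key hash
    return user_id % NUM_PARTITIONS

# produced in this interleaved order: (user_id, step)
produced = [
    (1, "welcome"), (2, "welcome"), (1, "tour"),
    (4, "welcome"), (2, "tour"), (1, "checkin"),
    (4, "tour"), (2, "checkin"), (4, "checkin"),
]

# each partition is an append-only log
partitions = defaultdict(list)
for user_id, step in produced:
    partitions[partition_for(user_id)].append((user_id, step))

# one consumer per partition, each reading its own log in order.
# consumers run independently, so cross-partition timing can be anything,
# but what a given user sees depends only on their own partition's order.
delivered = defaultdict(list)
for partition_id, log in partitions.items():
    for user_id, step in log:
        delivered[user_id].append(step)
```

users 1 and 4 happen to share a partition here, and each still gets welcome, tour, checkin in order. sharing a partition costs you some parallelism, not correctness.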

this is why people reach for kafka in event-sourcing setups, in pipelines that care about per-entity ordering, in anything where "process this user's stuff in order" is a real requirement. rabbitmq and standard sqs queues don't have this concept first-class (sqs fifo queues get part of the way there with message group ids). kafka does, and it's beautifully designed.

if you want to actually get good at this, kafka's consumer groups concept is worth a separate deep-dive — partition rebalancing, offsets, what happens when a consumer dies, the works. there's a lot under the hood.

the actual lesson here

queues are not one thing. they're a whole spectrum of design decisions:

- sync vs async architecture is the first call. async wins for any non-critical work.

- push vs pull is the second call. push for simple consumers and built-in dedup. pull for control and tunability.

- single-queue fifo is a myth at any real scale.

- when ordering matters, you're either reaching for kafka or rolling your own partitioning logic on top.

and here's the philosophy. there is no best queue. rabbitmq is great when you want simple. sqs is great when you want zero ops and full control. kafka is great when ordering and replay matter. bullmq is great when you're already on redis. you can mix and match: your notification system can be push-based, your video pipeline can be pull-based, your event log can be on kafka. nobody's stopping you. you are paid to solve the problem, not to use the fanciest queue.