designing the unread message count

the tiny number on your messaging icon: designing the unread sender count

a system that looks deceptively simple on the surface but hides a bunch of interesting engineering decisions underneath. you know that little number on your messaging icon — the one that tells you how many new people messaged you. let's walk through how to actually build it.

what you'll take away

quick pointers so you know what to look for as you read:

- composite indexes win or lose by column order.

(to, ts, from)is one query plan,(ts, to, from)is a full scan in disguise. - it's not an anti-pattern to optimize for your most critical query. hyper-specific indexes are fine if they serve the product.

- the punching bag pattern protects critical components from redundant load. drop ops you know won't change state before they hit the core.

- start simple. measure. then optimize. don't jump to redis on day zero if a single SQL query handles it.

the requirement

you know that little number on the messaging icon in linkedin, twitter, instagram? that's not the total number of unread messages. it's the number of unique people from whom you received new messages since you last tapped on the icon.

so if your friend sends you 100 messages, the indicator shows 1, not 100. if three different people each send you messages, it shows 3. you tap the icon, the counter resets. next time someone messages you, it starts counting again.

sounds simple. let's see.

approach 1: count on the fly

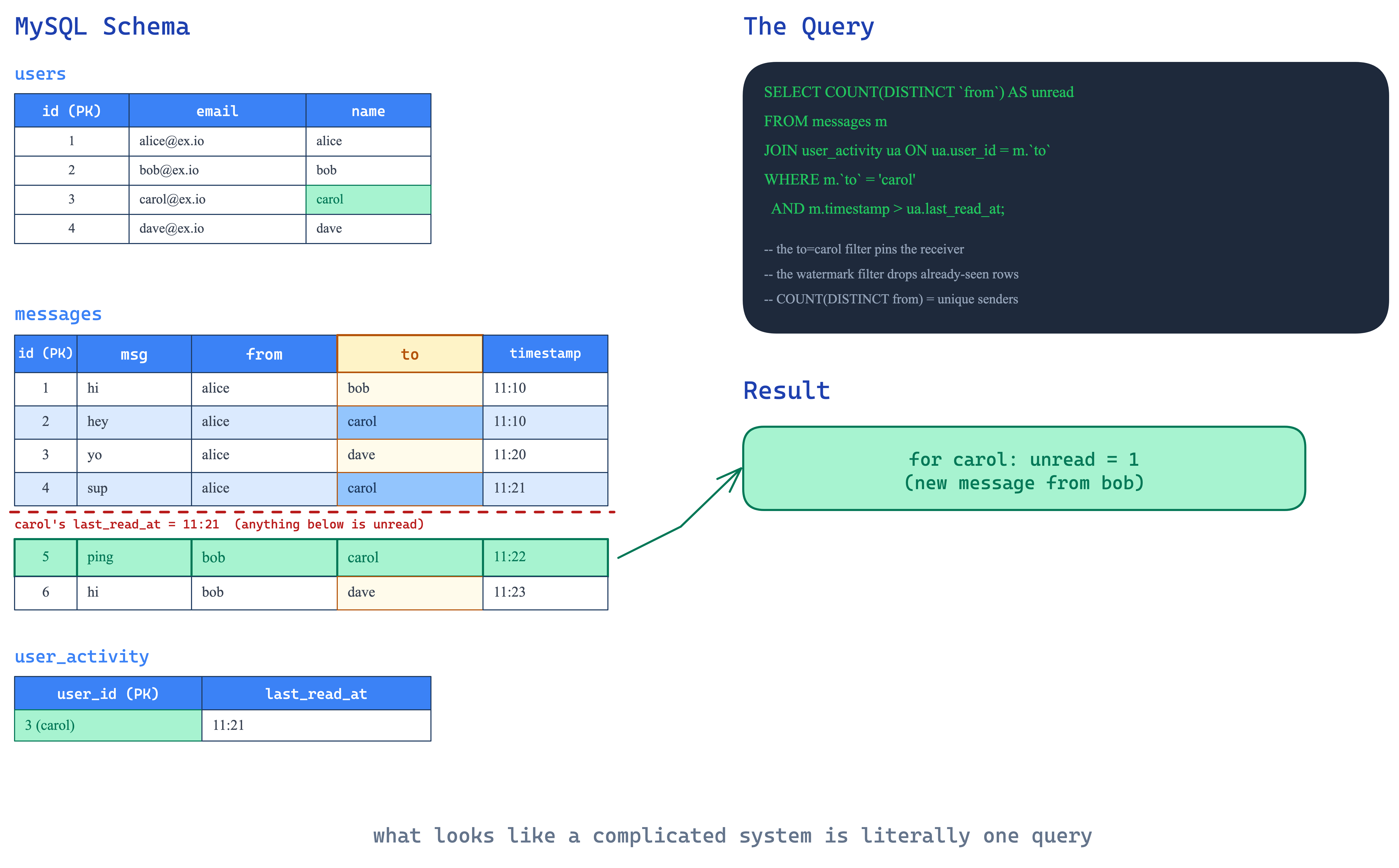

your schema in mysql (or any relational db) has three tables — users, messages, and user_activity. user_activity.last_read_at resets to current time whenever the user taps the message icon. the query to get the unread count is literally:

SELECT COUNT(UNIQUE(from))

FROM messages

WHERE to = ?

AND timestamp > last_read_at

that's it. what looks like a super complicated system is literally this one query. at small to medium scale, this works just fine.

but will this scale? that depends entirely on your indexing strategy. and this is where most people mess up.

the indexing deep dive

let's say you blindly create individual indexes on to and timestamp. seems reasonable right? here's how the query evaluates.

take user C who was last online at timestamp 11:11. the query fires WHERE to = C AND timestamp > 11:11.

the to index (B+ tree, ordered by to then id) gives you all messages ever sent to C. could be millions of rows across 10 years. the timestamp index gives you all messages after 11:11 — potentially the entire table if the timestamp is old enough. then the database does a set intersection of these two result sets to find the matching rows.

imagine millions of entries from the to index intersected with millions from the timestamp index. catastrophically slow.

composite index to the rescue

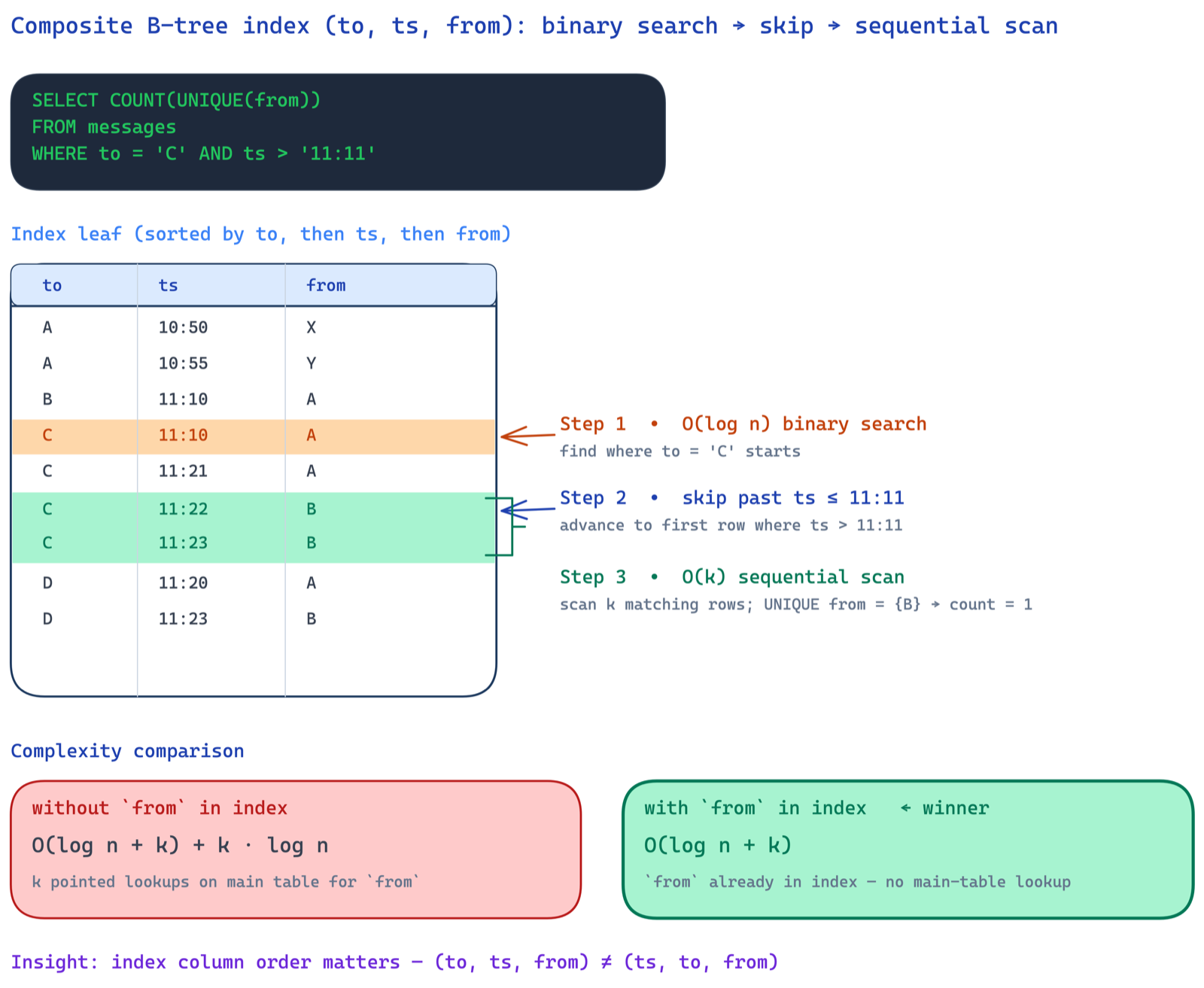

the fix is a composite index on (to, timestamp, from). now when you fire WHERE to = C AND timestamp > 11:11:

- O(log n) — binary search to find where

to = Cstarts - skip — within C's entries, jump to where

timestamp > 11:11 - k sequential reads — scan only the matching entries

total: O(log n + k) where k is the number of matching rows. no set intersection. no pointed lookups on the main table for the from column (because it's baked into the index). done.

now compare:

- without

fromin index:O(log n + k) + k × O(log n)— for each of the k matched rows, you do a pointed lookup on the main table to get thefromcolumn for theCOUNT(UNIQUE(from)). - with

fromin index:O(log n + k)— everything you need is right there in the index.

the overhead? just 8 extra bytes per index entry for storing from. even with 10 million messages, that's 80 MB. peanuts compared to the compute savings.

and yes, this index is hyper-optimized for this one query. that's perfectly fine. it's not an anti-pattern to optimize for your most critical query. as long as it works for your product and business, do it.

also — the order of columns in the composite index matters. if you did (timestamp, to, from) instead, the first column would match a huge range of timestamps, then you'd have to linearly scan through all of them to filter by to. completely defeats the purpose. the index should be ordered to match your query's selectivity — to first (narrows to one user), then timestamp (narrows to recent messages), then from (avoids main table lookup).

approach 2: pre-computed with redis

the on-the-fly approach works at decent scale. but if you want to pre-compute, here's how it shapes up.

you need a way to know whether a message was actually delivered or not. websockets help — if the user is connected, the message is delivered live and doesn't count. if the user is offline, the message is undelivered and that's when the counter should bump. so you need an online/offline service that knows the websocket state of every user.

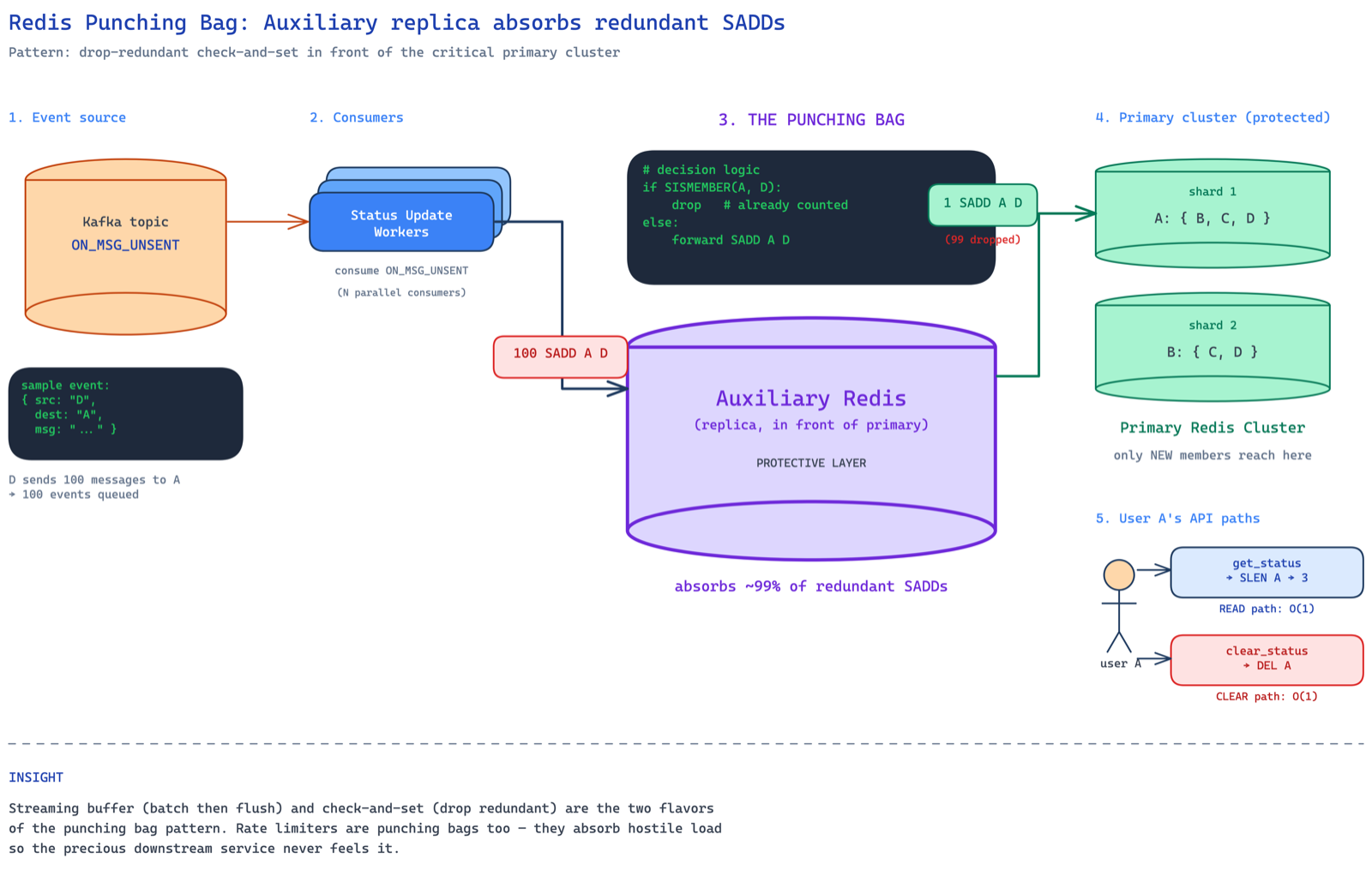

whenever a message can't be delivered in real-time (user is offline or inactive), an ON_MSG_UNSENT event hits kafka partitioned by receiver ID. workers consume it and fire SADD on redis — adding the sender to the receiver's set.

so if D sends A 10 messages, the worker fires SADD A D ten times. but since it's a set, D only appears once. the count? just SLEN A. returns 3 if A has messages from B, C, and D.

when A taps the message icon: DEL A. counter reset. done.

the read path is a single SLEN call. the clear path is a single DEL. both O(1). the write path is SADD calls from workers.

the punching bag pattern

now here's the micro-optimization. if D sends A 100 messages, the worker fires SADD A D a hundred times. but after the first one, D is already in the set. the remaining 99 are redundant operations — they don't change the data but they still consume redis resources.

at peak load, imagine 90% of your commands are redundant. your redis cluster is both read-heavy (polling for status) and write-heavy (ingesting events). every unnecessary command adds up.

the punching bag pattern is about protecting your critical component. you add an auxiliary redis replica in front. before firing SADD on the primary, you check the replica — is this member already in the set? if yes, skip. if no, write to primary.

this isn't your day-zero solution. it's a day-n optimization when you observe that a huge percentage of writes are redundant. the pattern shows up everywhere — rate limiters are essentially punching bags that absorb load before it hits your core system.

two flavors of the punching bag:

- streaming buffer — batch and buffer writes before they hit the db

- check-and-set — discard redundant operations before they reach the critical component

key takeaways

- start with a single SQL query. at small to medium scale,

SELECT COUNT(UNIQUE(from)) FROM messages WHERE to = ? AND timestamp > last_read_atliterally is the system. don't overcomplicate. - composite indexes are surgical tools. column order is everything.

(to, ts, from)makes the query O(log n + k). reorder the columns and you've built a beautifully indexed full table scan. - including columns in the index avoids main table lookups. 8 extra bytes per row to skip k pointed lookups is one of the cheapest trades in databases.

- redis pre-computation is a day-n move. only when the on-the-fly query hits its ceiling.

- the punching bag pattern saves your critical component from doing work it doesn't need to do. drop redundant ops before they hit the core.

and the bigger picture — identify the read path and write path, optimize them independently. for reads: indexes, replicas, caches, pre-computed counts. for writes: batching, buffering, punching bags to absorb redundancy.

nothing is best. everything depends on the usecase. and the answer almost always lies in the gray area — not purely in engineering, not purely in product, but in that sweet spot where both work together.